Story Highlight

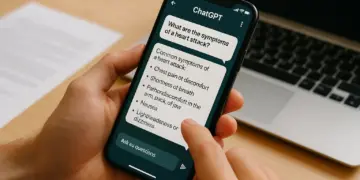

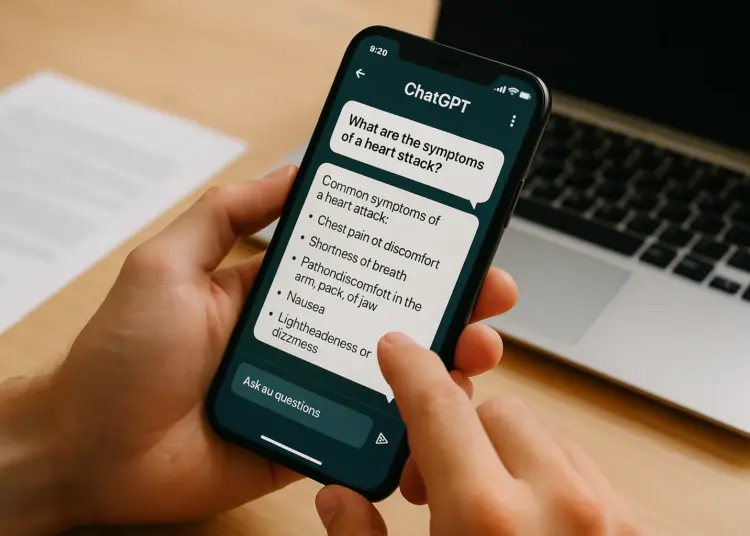

– Users turn to ChatGPT for understandable health information.

– More than 40 million people a day ask ChatGPT for health advice.

– Chatbots can remember past queries to personalize responses.

– AI may prioritize helpfulness over accuracy and reliability.

– Data privacy risks necessitate caution in using AI for health.

Full Story

Alexandra Watson, who has been managing a heart condition, turns to an unconventional resource for health questions: ChatGPT, which she affectionately calls ‘Chad.’ The large language model (LLM) has become Watson’s first point of contact when she has questions about her health. Describing her experience with the tool, she says it “cuts through the noise” and delivers information that is clear and accessible. “I couldn’t get my cardiologist to spend this time talking me through every question I have on the subject,” she explains. The AI, she says, lets her explore questions in depth and talk through hypothetical scenarios, which she often feels are dismissed in traditional medical consultations. “Doctors are dismissive, Google just scares you, but Chad is helpful,” she adds.

In January, OpenAI, the company behind ChatGPT, reported that more than 40 million people use the chatbot every day for health-related guidance, and that health-related messages account for over 5% of all messages sent through the service worldwide. Last year, Healthwatch, an organisation focused on healthcare improvement, reported that 9% of men and 7% of women in England had consulted AI chatbots for medical queries.

For Watson, one advantage is ChatGPT’s ability to retain a history of her conversations, which lets it tailor its answers when she asks follow-up health questions. The chatbot refers back to her earlier questions about her heart, giving the exchanges a continuity she likens to an ongoing conversation. Although she appreciates its supportive tone, she acknowledges that the AI tends to put a positive spin on things. For instance, when she asked for advice on suitable diets, it told her to be kind to herself following an operation almost two years ago and recommended that she “take it easy” during menopause.

Carole Railton, another user of the platform, echoed Watson’s sentiments about ChatGPT. She uses the chatbot almost daily for professional and personal tasks, including travel arrangements, and because of her heart condition she has also begun to rely on it for medical insights. When she develops new symptoms, she consults ChatGPT first, feeling that traditional medical check-ups are often superficial. The AI also proved helpful when she was planning a trip, reminding her to obtain the “fit to fly” note she needed to fly with her medication. “If a human was as knowledgeable and as nice, I would make a beeline for them,” she said of the chatbot’s affable manner.

This surge in the chatbot’s popularity for health advice may stem from its user-friendly approach, which some users find less alarming than conventional search engines such as Google. With timely appointments with general practitioners (GPs) increasingly hard to secure, many also find reassurance in how accessible such tools are. Yet caution is warranted: most of these AI applications were not designed primarily for medical consultation. Their terms and conditions often include disclaimers stating they are “not intended for use in the diagnosis or treatment of any health condition.” A recent study from Stanford and Berkeley noted a concerning trend: between 2022 and 2025, the proportion of chatbot responses that included disclaimers or health warnings fell dramatically, from 26.3% to just under 1%.

Chatbots are not infallible. They have been known to generate inaccurate or misleading information, colloquially termed “hallucinations.” For example, a report in an American medical journal described the case of an individual who, after consulting ChatGPT about dietary changes, replaced table salt with sodium bromide and developed severe psychiatric symptoms. Such incidents raise legitimate concerns about the reliability of AI-generated health guidance.

Data privacy is another critical issue that remains largely unaddressed. The convenience of AI tools often overshadows concerns about the information being shared, which raises a pressing question: what happens to the health data users willingly disclose to tech companies? It is a strong reason for users to approach AI platforms with more caution.

In the US, OpenAI has positioned ChatGPT as a promising ally that can help patients navigate an often complex healthcare system. In January, the company introduced a feature called ChatGPT Health, which allows a select group of users to connect their health data, such as records from Apple Health or MyFitnessPal, for more personalized interactions. The company said this information would be kept separate from other data. However, the feature is not available in the UK or the European Economic Area, largely because of stringent digital privacy laws.

A study published in Nature Medicine examined how ChatGPT handled a range of medical scenarios and found mixed results. In just over half of the cases where an urgent hospital visit was warranted, the chatbot wrongly advised users to stay at home or wait for a routine appointment. Lead researcher Ashwin Ramaswamy said: “ChatGPT Health is most reliable when the clinical decision is least consequential, and least reliable when it matters most.”

OpenAI responded to questions about the study by stressing its commitment to improving the tool, and said the findings may not reflect typical usage or how the tool is intended to be used.

Searching for health information online is not new, but the conversational style of LLMs differs from a traditional search. Dr Sonia Szamocki, a former NHS doctor and now chief executive of health tech firm 32Co, said this trend reflects a broader problem of access to medical advice. “What people are trying to solve is not a new problem, which is that it’s hard to get access to doctors,” she says.

Chatbots such as ChatGPT work by recognising patterns in their training data rather than by drawing on an authoritative body of knowledge. Szamocki explains that their answers are not guaranteed to be accurate and urges users to keep a healthy skepticism about what they are told. How users phrase their questions also matters, because it can skew the answers they receive. Dr Caroline Pilot, acting chief medical officer for the digital clinic HealthHero, noted that health consultations require a nuanced approach in which clinicians take a comprehensive patient history rather than relying only on what the patient thinks is important.

Attitudes among healthcare professionals toward patients using AI for medical questions vary. Dr Pilot notes that while some clinicians are concerned, others see an opportunity to engage with increasingly informed patients. Professor Victoria Tzortziou-Brown, chair of the Royal College of General Practitioners, welcomes patients’ curiosity about their health. However, she cautions that chatbot advice carries risks, so its reliability should not be taken for granted.

Ultimately, while AI has significant potential to support patients, it should work alongside, not replace, qualified healthcare providers, serving as a supplementary resource rather than a primary source of medical advice.

Our Thoughts

The article highlights the growing reliance on AI chatbots such as ChatGPT for medical advice, which raises significant safety concerns. Mitigating the risks requires clearer guidelines and better education about the limitations of these technologies. A key lesson is that users must understand AI chatbots are not a substitute for professional medical advice. Where organisations make such tools available to staff, the UK Management of Health and Safety at Work Regulations 1999 require employers to provide suitable information (Regulation 10) and training (Regulation 13).

Regulatory concerns include a lack of adherence to guidelines emphasizing accurate, evidence-based medical information. In addition, health data is special category data under the UK General Data Protection Regulation (UK GDPR), and the potential for privacy breaches underscores the need for stricter controls over how AI systems handle personal health information.

Preventive measures should involve implementing robust verification protocols for AI-generated advice, reinforcing the importance of consulting healthcare professionals for serious health issues, and ensuring that users are aware of privacy implications. Regular audits of AI chatbots and their compliance with health advisory standards could significantly reduce the risk of misinformation leading to adverse health outcomes.