Story Highlight

– Jury finds Meta and YouTube liable for mental health damages.

– Kaley began using YouTube at age six and Instagram at nine; she reported anxiety, body dysmorphia and thoughts of self-harm.

– Meta held 70% responsible; $6 million in damages awarded.

– Companies plan to appeal verdict; deny connection to addiction.

– Case may influence future social media litigation and regulations.

Full Story

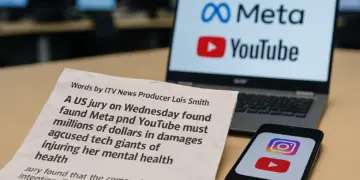

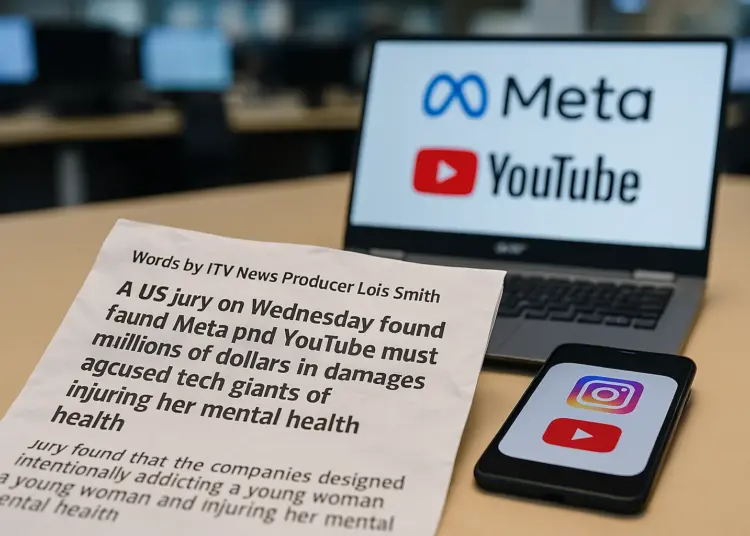

A jury in the United States has delivered a significant verdict against Meta and YouTube, finding the companies liable for damages related to a young woman's mental health decline caused by her use of their platforms. The case drew attention as the first of its kind to focus on corporate responsibility for childhood addiction to social media, and it could have far-reaching implications not only in the United States but possibly in the UK as well.

The plaintiff, identified as Kaley, and her mother initiated the lawsuit against the two tech giants, claiming that the addictive nature of their platforms resulted in severe consequences for Kaley’s mental well-being. The jury concluded that both Meta, which operates Facebook, Instagram, and WhatsApp, and Google, which owns YouTube, were negligent in their approach to platform design, putting profit ahead of the health of their younger users.

In opening statements, Kaley's legal team argued that both companies had engineered their platforms to create addictive behaviours among children. Mark Lanier, the attorney representing Kaley, said the tech firms had exploited their knowledge of human psychology to ensnare young users. “Two of the richest corporations in history have engineered addiction in children’s brains,” he told the court.

Kaley testified that she began using YouTube at the age of six and Instagram at nine. Her mother also testified, describing the harmful effects the platforms had on her daughter, which included anxiety, body dysmorphia, and thoughts of self-harm. Snap and TikTok were initially named as defendants as well; however, both reached settlements before the case went to trial.

The Los Angeles jury determined that the negligence of both Meta and YouTube was a significant factor in causing harm to Kaley. It found that each company was aware of the potential dangers its platform posed to minors and failed to provide adequate warnings to users. Following deliberations, the jury awarded Kaley $3 million in compensatory damages, along with an additional $3 million in punitive damages aimed at holding the companies accountable for their actions. The jury concluded that Meta was primarily responsible for Kaley’s suffering, attributing 70% of the culpability to it, with YouTube responsible for the remaining 30%.

In response to the verdict, both Meta and YouTube expressed their disagreement, signalling intentions to appeal. Meta argued that the struggles with mental health faced by Kaley were attributable to other factors in her life, including a challenging home environment, stating that “not one of her therapists identified social media as the cause” of her issues. YouTube sought to differentiate itself by characterising its platform as akin to television rather than purely a social media site, and highlighted the various safety features and user controls designed to mitigate risks.

The implications of the trial may extend well beyond the verdict itself. Lorna Woods, a professor of Internet Law at the University of Essex, indicated that the ruling could influence future cases. While the verdict does not directly require changes to platform designs or algorithms, it sets a potentially transformative precedent. Woods said that even though the damages may seem modest compared with the billions these companies generate, the ruling may incentivise a broader shift in corporate behaviour on user safety, particularly for minors.

This case is seen as a bellwether, part of a wider wave of litigation that might prompt a shift in how tech companies address the mental health of young users. According to Terry Green, a Deputy Managing Partner at Katten Muchin Rosenman, social media companies may find it prudent to settle outstanding claims as they navigate the repercussions of this verdict.

In the UK, the ruling comes at a critical time, with social media addiction increasingly under scrutiny. Sir Keir Starmer, the Prime Minister and leader of the Labour Party, remarked that the UK government would carefully consider the implications of the ruling for child safety and social media usage. Discussions about measures such as banning social media access for those under 16 have gathered momentum, especially following Australia’s recent legislation on the matter.

The UK government has initiated a pilot programme involving 300 teenagers that will include trials of social media bans and digital curfews. This initiative aligns with ongoing conversations around digital safety and coincides with consultations regarding the Online Safety Act, which already imposes obligations on platforms to safeguard children.

The House of Lords has also recently shown support for proposals aiming to prevent children under 16 from accessing social media platforms, reigniting the debate over the duties and responsibilities of tech companies toward their youngest users. While the move has garnered support from various stakeholders, concerns remain about the potential unintended consequences of such bans, which could push young users towards less safe corners of the internet.

As societal awareness and concern regarding the impact of social media on mental health continue to rise, the significance of the US ruling against Meta and YouTube may ripple across legal and legislative landscapes globally, potentially urging other nations, including the UK, to reconsider and reinforce protections for vulnerable users in the digital age.

Our Thoughts

The case highlights critical lapses in the duty of care that tech companies have towards young users, particularly in the design and operation of their platforms. To prevent such incidents, the risk-based approach of the Health and Safety at Work etc. Act 1974 offers a useful model: the Act requires employers to ensure, so far as is reasonably practicable, the health and safety of their employees and of others affected by their undertaking. Companies should likewise carry out robust risk assessments that identify the potential mental health impacts of addictive design features such as infinite scrolling.

Additionally, the Online Safety Act requires platforms to protect children from harmful content and risks. A failure to provide adequate warnings and protective measures could constitute a breach of this legislation.

Key safety lessons include the need for transparency in platform design and for features that limit excessive use, particularly by minors. User engagement practices should be evaluated regularly to ensure compliance with safety regulations and to take account of user wellbeing. Enhanced oversight and regulation by bodies such as Ofcom could further mitigate the risks of social media use among vulnerable populations.