Story Highlight

– Barry Keoghan faces online abuse impacting his mental health.

– His experience highlights how UK law treats the escalation of online harassment.

– Online criticism can transition from opinion to unlawful behavior.

– Platforms are accountable for managing harmful content responsibly.

– Legal boundaries of online abuse are evolving and tightening.

Full Story

Actor Barry Keoghan has opened up about the impact of online abuse on his mental well-being and how it has led him to limit his presence in public life. His revelations not only shed light on the darker aspects of celebrity culture but also provoke pressing questions regarding the legal boundaries of online commentary, particularly in the context of the UK’s evolving laws surrounding cyberbullying and online safety.

### Keoghan’s Struggles with Online Criticism

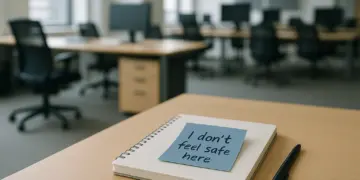

In recent discussions, Keoghan has spoken candidly about enduring harrowing online abuse targeting his appearance. The actor noted that this relentless scrutiny has significantly undermined his self-esteem, ultimately leading him to withdraw from public events and reduce his activity on social media. His concerns extend beyond his personal experience; he has also expressed worry about the potential long-term effects on his young son, who may one day face similar online negativity.

This particular scenario exemplifies a broader phenomenon where public figures find themselves at the mercy of relentless scrutiny that often escalates from mere opinion to harmful behaviour. The implications of such abuse can be profound, highlighting the critical need for a better understanding and management of online interactions.

### Legal Implications of Online Abuse

While Keoghan’s account is not itself framed as a legal matter under UK law, it raises significant legal questions about the boundaries of acceptable online discourse. UK law differentiates between free expression and unlawful conduct, guided by legislation that addresses online harassment and abusive messaging.

Despite the absence of a specific law addressing cyberbullying, existing legislation such as the Protection from Harassment Act 1997 and the Malicious Communications Act 1988 plays a critical role in defining illegal online behaviour. These laws target actions that are deemed harassing or that involve communications that are threatening, grossly offensive, or intended to cause distress.

In legal terms, the distinction often hinges on the nature, frequency, and intent behind the communications. A solitary comment is unlikely to be deemed harassment, but repeated, targeted conduct designed to inflict mental distress may clearly fall into unlawful territory. As courts increasingly adapt these laws to address online interactions, there’s a growing recognition of the potential harm inflicted through digital channels.

### Responsibility of Online Platforms

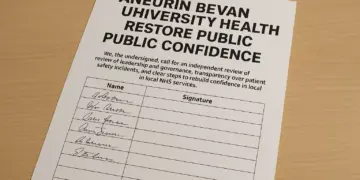

The issue of accountability extends beyond individuals to the platforms that facilitate these communications. Recent discussions indicate a growing emphasis on the responsibility of social media companies in managing online abuse. The UK’s Online Safety Act 2023 requires platforms like Instagram, TikTok, and X to proactively assess risks and implement measures to mitigate exposure to harmful content.

This legislative shift demands enhanced moderation processes, improved responsiveness to user complaints, and established safety protocols. Regulatory oversight, primarily by Ofcom, ensures that these measures are followed. While platforms may not face automatic liability for user-generated content, increased scrutiny is being directed at their role in identifying and addressing patterns of online abuse before significant harm occurs.

The implications for mental health are also coming to the forefront in discussions about online safety. As awareness grows regarding the significant emotional impact of online harassment, it becomes increasingly critical for platforms to tackle harassment seriously. The visibility of harmful patterns creates a scenario where platform inaction could demonstrate negligence.

For companies operating in online spaces, regulators are making their expectations clear: failing to act decisively against harmful behaviour not only brings regulatory consequences but can also damage reputations. This shift further complicates the existing landscape of online communication, particularly where the line between free speech and harmful conduct is still drawn inconsistently.

### The Evolving Framework for Online Abuse

Keoghan’s experience is emblematic of a larger trend in how online behaviour is perceived by both legal authorities and society at large. There is a mounting focus on accountability, with expectations for public figures to be shielded from harmful behaviour gradually solidifying. Legal precedents set by high-profile cases are likely to continue influencing the application of laws governing online interactions.

While free expression remains fundamental, the line that separates opinion from harassment is undergoing a transformation as legal frameworks adapt to contemporary challenges. The ongoing consequences brought forth by online abuse underscore that this is no longer merely a cultural issue; it constitutes a vital legal concern requiring attention and action.

### In Summary

The insights offered by Barry Keoghan highlight critical themes surrounding the nature of online discourse and the legal responsibilities associated with it. As conversations around online abuse gain momentum, both public figures and platform operators must engage with the complexities of protecting individuals while safeguarding the principle of free expression.

The dialogue about how UK law treats online behaviour is ongoing, and clarity regarding the legal boundaries will only grow in importance as our digital interactions continue to evolve.

Our Thoughts

The article highlights the detrimental effects of online abuse faced by actor Barry Keoghan, raising critical issues around legal responsibilities and mental health impacts. To prevent such incidents, a stronger focus on moderation and proactive safety measures is essential for social media platforms. Under the UK’s Online Safety Act 2023, platforms must enhance their moderation systems and enforce effective reporting mechanisms to address harmful content adequately.

Key lessons include the necessity for platforms to recognise and respond to patterns of abuse before they escalate, which can mitigate psychological harm to individuals. Furthermore, ongoing training and awareness regarding the legal distinctions between lawful expression and harassment under the Protection from Harassment Act 1997 and the Malicious Communications Act 1988 should be prioritised.

Relevant breaches may occur when platforms fail to act upon repeated and distressing interactions that could be classified as harassment. Compliance with these regulations is crucial not only for legal responsibility but also for the protection of users’ mental health. Ensuring robust safeguards against online abuse can ultimately foster safer digital environments.