Story Highlight

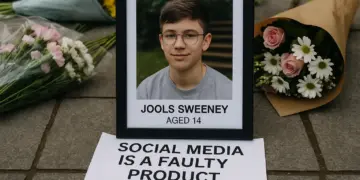

– Young woman claims social media addiction harmed her life.

– Jury finds Meta and YouTube liable for design flaws.

– Verdict signals change for tech industry and children’s safety.

– Governments worldwide begin regulating child social media use.

– Lawsuits against tech firms could reshape addiction accountability.

Full Story

A significant ruling in a recent Los Angeles court case has sent ripples through the tech industry, drawing attention to concerns about the addictive nature of social media applications. Central to the case was Kaley, a young woman who began using YouTube at six and Instagram at nine. Now 20, Kaley spoke candidly about her dependency on social media, admitting, “I can’t, it’s too hard to be without it.” Her testimony contributed to a historic verdict that found major tech companies, including Meta and Google, liable for deliberately designing products that encourage addiction among users.

This landmark decision reverberated beyond the courtroom, igniting a sense of hope among families and child advocacy groups that a paradigm shift in the regulation of social media might finally be underway. The jury, comprising five men and seven women, concluded that the design of popular apps like those owned by Mark Zuckerberg’s Meta and Google’s YouTube has led to detrimental mental health outcomes for millions of young people, including Kaley. One juror expressed the sentiment shared by many, stating, “We wanted them to feel it… this was unacceptable.”

The implications of the verdict are profound. It marks a potential turning point for the tech industry, one often described as the sector’s “big tobacco” moment. The verdict not only highlights existing problems with social media but also raises questions about the responsibility of tech giants toward their users, particularly vulnerable young people. Following the ruling, shares of both Meta and Alphabet, Google’s parent company, declined significantly.

This verdict comes on the heels of another recent ruling in New Mexico, where Meta was mandated to pay $375 million for misleading consumers regarding the safety of its platforms. The state’s attorney general underscored the gravity of the situation, asserting that the features of these platforms have been implicated in enabling predatory behavior and promoting addictive use among minors. Although the damages awarded in the California case were relatively modest at $6 million, the ramifications of these verdicts could be far-reaching, with many legal experts suggesting this is just the beginning of a larger movement against the tech industry.

As legal challenges mount against various platforms, including Snapchat and TikTok, the focus is increasingly on whether these apps were intentionally crafted to foster addiction. Experts warn that if courts continue to find these tech companies liable, the financial consequences could be immense. In a broader context, governments around the world are also starting to respond with regulatory measures aimed at protecting children. Indonesia has implemented a mandate to deactivate social media accounts of minors under the age of 16, following Australia’s lead. Meanwhile, Brazil has enacted online safety legislation, and in the UK, Prime Minister Keir Starmer echoed the need for enhanced child protection measures, including a potential social media ban for those under 16, as well as restrictions on addictive features.

The geopolitical landscape is evolving in tandem with these court rulings. In the United States, conservative lawmakers are increasingly vocal about the need for greater child protections online, a stark shift from a previously more lenient stance. Matt Kaufman, head of safety at Roblox, noted that other countries are starting to assert their authority in setting robust internet policies, echoing sentiments that resonate beyond US borders.

However, the road ahead is likely to be fraught with challenges. Tech giants are already signaling that they intend to appeal the recent decisions, and Meta has said it disagrees with the jury’s findings. A spokesperson stated, “Teen mental health is profoundly complex and cannot be linked to a single app,” while Google insisted that the case misconstrued YouTube’s intent as a platform. Both responses point to measured but firm resistance to potential systemic change.

The significance of this landmark case extends to its legal ramifications, shining a light on a new approach to establishing liability for social media platforms. Platforms have historically been shielded from accountability by Section 230 of the US Communications Decency Act, which protects them from liability for user-generated content. This ruling marks a shift: the platforms themselves can be held responsible for design decisions that may lead to user harm.

The case’s outcomes may empower further legal challenges as campaigners describe this moment as a clarion call for change in a landscape long dominated by the tech elite. There is a growing sense, as shared by lawyers and advocates, that this case could inspire extensive litigation aimed at holding these companies accountable for harmful practices and drive meaningful reform in their operations and overall business models.

As this situation unfolds, voices from affected families underscore the urgency for immediate action. Ian Russell, whose daughter tragically took her own life after struggling with the mental health impacts of social media, articulated the need for decisive measures that genuinely address the root causes of these issues rather than temporary solutions.

Social media is often positioned as a modern marvel, yet the recent court proceedings have laid bare the darker implications of its pervasive presence in young people’s lives. The dialogue surrounding social media’s role in the mental health crisis is gaining momentum, leading to a heightened scrutiny of how these platforms operate and the responsibilities they bear.

As we move forward, the hope remains that shifts in both public sentiment and legal accountability will drive the changes needed to ensure the safety and well-being of vulnerable users. Each legal battle brings us closer to addressing a crisis that has simmered beneath the surface of digital interaction, and to ethical standards that protect the youngest members of society from the complex realities of an increasingly connected world.

Our Thoughts

The case highlights significant failures in the design and management of social media platforms, which have resulted in harmful effects on young users. Several lessons can be drawn:

1. **Risk Assessment and Mitigation**: A thorough risk assessment of the addictive features of social media applications should have been carried out. The Health and Safety at Work etc. Act 1974 places duties on employers toward their employees and others affected by their work activities rather than toward product users, but a comparable duty-of-care approach would require addressing the potential psychological risks associated with product design.

2. **User Safety Protocols**: The platforms should have implemented stricter protocols to protect young users from addiction and mental health risks, in keeping with the principle in the Children Act 1989 that the welfare of the child is paramount.

3. **Regulatory Compliance**: The jury’s findings raise questions about how such product designs would fare under UK online safety rules, such as the Online Safety Act 2023, which is intended to ensure that digital platforms do not cause harm to users, particularly children.

4. **Education and Awareness**: There is a critical need for increased awareness and educational measures surrounding the impacts of social media use, focusing on vulnerable populations.

Preventative measures could include better regulation of app features that promote compulsive usage and heightened accountability from companies for the psychological impact of their products.